You caught Pidgeys. You raided gyms. You walked circles around your neighborhood at 11 PM spinning Pokéstops like it was a perfectly normal thing to do.

What you didn’t know – what nobody told you – is that every time you pointed your phone at a church, a fountain, a mural, or a storefront to scan a Pokéstop, you were doing something far more significant than earning XP.

You were training the brain of a robot.

The 10-Year Setup Nobody Saw Coming

Niantic launched Pokémon GO in July 2016. Within about two months, it had been downloaded more than 500 million times. At its peak, the game had players walking millions of real-world miles every day, phone cameras up, scanning the physical world around them.

For Niantic, this wasn’t just a game. It was the largest crowdsourced geospatial data collection operation in human history – and most players had no idea it was happening.

Over the next decade, Niantic quietly accumulated 30 billion images from players worldwide. Not from satellite cameras. Not from fleets of Street View cars. From regular people, phones in hand, scanning bus stops and temples and coffee shops and parks in cities across every continent.

Each scan wasn’t just a photo. It came loaded with metadata – where the phone was in space, which direction it was facing, whether it was moving, how fast, and which way it was tilted. Combined with GPS coordinates and timestamps, every image became a precisely located data point in an increasingly detailed neural map of the real world.
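
To make that concrete, here is a minimal sketch of what one such record could look like. The class name, every field name, and the sample values are illustrative assumptions, not Niantic’s actual schema; the point is only that each image travels with its pose, motion, position, and time.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ScanFrame:
    """One hypothetical scan frame: an image plus the metadata described above."""
    image_id: str          # made-up identifier for the stored image
    latitude_deg: float    # GPS latitude
    longitude_deg: float   # GPS longitude
    captured_at: datetime  # timestamp of the capture
    heading_deg: float     # compass direction the camera faced, 0 = north
    pitch_deg: float       # tilt up or down
    roll_deg: float        # tilt side to side
    speed_m_s: float       # how fast the phone was moving

    @property
    def is_moving(self) -> bool:
        # The 0.2 m/s threshold is arbitrary, chosen only for illustration.
        return self.speed_m_s > 0.2


# Sample values are invented for the example.
frame = ScanFrame(
    image_id="example-0001",
    latitude_deg=41.8781,
    longitude_deg=-87.6298,
    captured_at=datetime(2021, 1, 15, 23, 0, tzinfo=timezone.utc),
    heading_deg=270.0,
    pitch_deg=5.0,
    roll_deg=-1.5,
    speed_m_s=0.8,
)
print(frame.is_moving)
```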

What “Scanning a Pokéstop” Actually Did

When Niantic introduced the Pokéstop scanning feature, it was framed as a way to improve AR experiences and earn in-game bonuses. Players would walk up to a landmark and sweep their phones around it in a circle.

Behind the scenes, something more sophisticated was being built.

For each of its million-plus mapped locations worldwide, Niantic ended up with thousands of images of the same spot – shot at different angles, different times of day, different weather conditions, different seasons. Sunrise. Midnight. Rain. Snow. Summer. A single Pokéstop might have been photographed in clear morning light by a commuter, in foggy dusk by a teenager, and in the middle of a snowstorm by someone who really needed that Stardust.

No camera car can do that. No satellite can do that. No government mapping program can replicate that kind of environmental diversity at that scale – because those programs don’t have hundreds of millions of motivated humans doing the legwork for free.

The result is a dataset that is genuinely one-of-a-kind on Earth.

The Technology That Came Out of It: The LGM

In late 2024, Niantic unveiled what it had been building with all of that data: a Large Geospatial Model, or LGM.

Think of it as a spatial equivalent of ChatGPT. Just as a Large Language Model (LLM) learns to understand and generate human language by training on billions of text examples, Niantic’s LGM learns to understand and navigate physical space by training on billions of real-world images – all precisely tagged with location data.

The result is a system that can look at a single image from a phone camera and tell you exactly where in the world you are – not within a few meters like GPS, but within a few centimeters.

To do this, Niantic developed what it calls a Visual Positioning System (VPS). As part of building it, the company trained more than 50 million individual neural networks, totaling over 150 trillion parameters – all dedicated to understanding specific physical locations around the world.
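
A quick back-of-the-envelope check puts those two figures in proportion. Both are quoted as lower bounds ("more than"), so the result is only a rough average, not a published specification.

```python
# Figures as quoted above; both are lower bounds.
networks = 50_000_000
total_parameters = 150_000_000_000_000

# Rough average size of one location-specific network.
avg_parameters_per_network = total_parameters // networks
print(f"{avg_parameters_per_network:,}")  # 3,000,000
```

In other words, each location-specific network is small by modern standards, on the order of a few million parameters. The scale comes from how many of them there are.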

But the LGM goes even further than just matching images to known locations. Because it has seen thousands of views of the same place from different angles, it can do something remarkable: infer what’s around the corner even when it has no image of it.

As Niantic Spatial’s CTO Brian McClendon has explained, a system that walks down a hallway one way should be able to figure out how to make its way back, even without having seen the hallway from the return angle. The LGM makes this possible by learning generalizable principles of how physical spaces are structured. It has seen enough churches, archways, intersections, and storefronts across the world that it can extrapolate what a new, unscanned side of a building probably looks like – because it has learned what buildings like that generally look like.

The Twist: They Sold the Games and Kept the AI

Here’s where it gets interesting.

In March 2025, Niantic announced it was selling its entire games division – Pokémon GO, Pikmin Bloom, Monster Hunter Now – to video game company Scopely for $3.5 billion. The deal closed in May 2025.

Niantic didn’t sell because the games were failing. It sold because the games had already done their job.

What remained was spun off as a new company, Niantic Spatial, which kept the technology, the maps, the neural networks, and those 30 billion images. The Pokéstops, the raids, the walking – all of it was infrastructure in service of a much bigger ambition.

Niantic Spatial’s goal is no longer to entertain you. It’s to give machines the ability to understand the physical world.

Delivery Robots Are Already Using It

The first major real-world deployment came through a partnership with Coco Robotics, a startup operating last-mile delivery robots on sidewalks across US and European cities.

The problem Coco faced is one that GPS alone cannot solve. In dense urban environments, satellite signals bounce off tall buildings before reaching the receiver, and the resulting position fix can be off by 50 meters or more – enough to send a robot into the wrong lane, block a doorway, or miss a pickup spot entirely. For precision robotics operating among pedestrians, that margin of error is unacceptable.

Niantic Spatial’s VPS fixes this. Using the LGM-powered positioning system, Coco’s robots can now place themselves within centimeters of their target location – stopping precisely at a restaurant’s pickup spot without blocking foot traffic, and pulling up right at a customer’s door rather than a few steps away.
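
The gap between those two error scales is easy to see with a small sketch. It uses the standard haversine formula for distance on a sphere; the target coordinates, the 0.5-meter pickup radius, and the helper names are assumptions made up for illustration, and nothing here reflects how Coco or Niantic Spatial actually compute positions.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius
PICKUP_RADIUS_M = 0.5         # hypothetical tolerance around the pickup spot


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two points given in degrees."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def offset_north(lat, lon, meters):
    """Return the point `meters` due north of (lat, lon)."""
    return lat + math.degrees(meters / EARTH_RADIUS_M), lon


target = (41.8781, -87.6298)               # an arbitrary street corner
gps_fix = offset_north(*target, 50.0)      # a fix that is 50 m off
visual_fix = offset_north(*target, 0.05)   # a fix that is 5 cm off

gps_error_m = haversine_m(*target, *gps_fix)
visual_error_m = haversine_m(*target, *visual_fix)

print(gps_error_m <= PICKUP_RADIUS_M)     # False: far outside the pickup zone
print(visual_error_m <= PICKUP_RADIUS_M)  # True: comfortably inside it
```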

The robots navigate using the same visual data that Pokémon GO players generated. That coffee shop a delivery bot stops at today was almost certainly scanned by a Pokémon trainer hunting a Snorlax three years ago.

The reCAPTCHA Comparison Is Unavoidable

Anyone who has spent time following the history of tech data collection will recognize the playbook immediately.

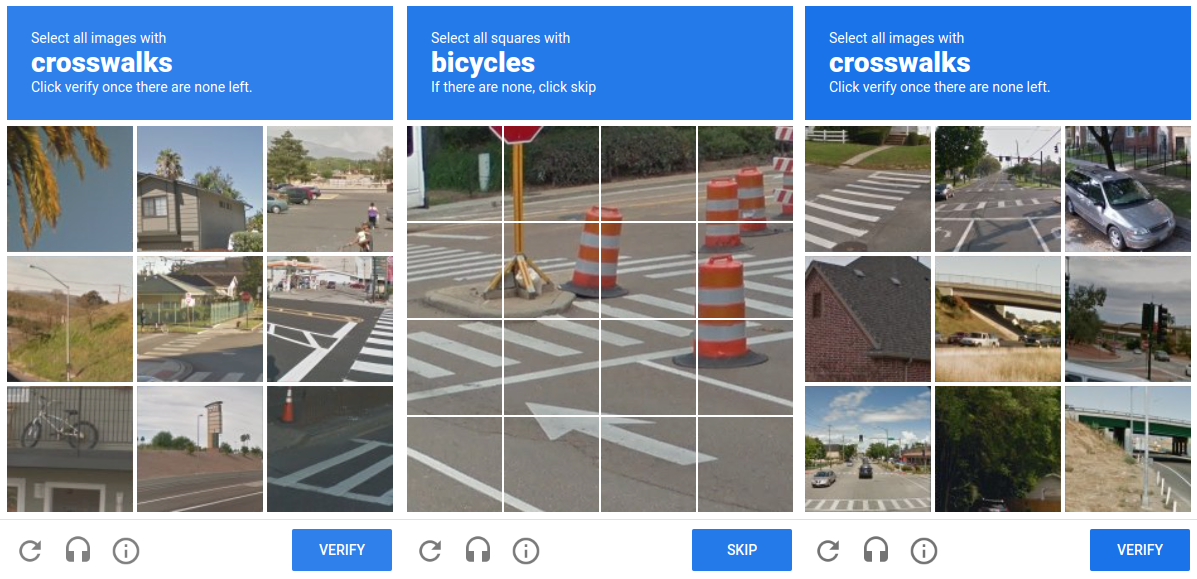

For years, Google’s reCAPTCHA system asked internet users to prove they weren’t robots by identifying fire hydrants, traffic lights, and crosswalks in grainy photos. Most people didn’t know they were also labeling images for machine-learning systems, widely reported to include self-driving car technology. They thought they were just logging in.

Niantic’s approach followed a similar structure. Players were incentivized with in-game rewards to scan real-world landmarks. The scanning was technically optional. But “optional” carries a lot of weight when it’s tied to progression in a game you’re already deeply invested in.

Niantic has pushed back on this framing, emphasizing that scanning requires active user participation and that the company’s data collection processes are privacy-compliant. That is likely true in a legal sense.

Whether players understood the broader commercial and technological context of what they were contributing to is a different question – one that the tech industry has still not fully resolved.

What Comes Next: A Living Map of the World

Niantic Spatial’s long-term vision is something it calls a living map – a hyper-detailed, continuously updated digital simulation of the physical world that evolves in real time as the world itself changes.

Coco’s delivery robots aren’t just consumers of this map. As they move through city streets, they generate new data that feeds back into it, making it progressively more accurate and more detailed over time. More robots from more companies will eventually do the same.

The company has also announced partnerships with aerospace firm Aechelon Technology to enhance US military training simulations, and with legendary game creator Hideo Kojima to build real-world immersive entertainment experiences. The same positioning technology that helps a robot find a front door can anchor a virtual character to a physical street corner in ways that feel genuinely real.

The LGM also has a semantic layer – not just knowing where things are, but what they are. Niantic Spatial’s system can classify environments at the pixel level, identifying ground, sky, walls, doorways, and more than 200 types of physical objects in real time. This is the kind of contextual understanding that autonomous systems – robots, AR glasses, self-driving vehicles – need to operate safely alongside humans.
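
As a toy illustration of what pixel-level classification produces, the sketch below treats an image as a grid in which every cell holds a class label, then summarizes how much of the frame is walkable ground. The four class names, their IDs, and the tiny 4x6 grid are invented for the example; the real system’s label set and output format are not described here.

```python
from collections import Counter

# Hypothetical class IDs, chosen only for this example.
CLASS_NAMES = {0: "sky", 1: "wall", 2: "doorway", 3: "ground"}
WALKABLE = {"ground"}

# A toy 4x6 "image": each cell is the class ID predicted for one pixel.
label_mask = [
    [0, 0, 0, 0, 0, 0],
    [1, 1, 2, 2, 1, 1],
    [1, 1, 2, 2, 1, 1],
    [3, 3, 3, 3, 3, 3],
]


def class_fractions(mask):
    """Return the share of pixels assigned to each class name."""
    counts = Counter(CLASS_NAMES[c] for row in mask for c in row)
    total = sum(counts.values())
    return {name: n / total for name, n in counts.items()}


fractions = class_fractions(label_mask)
walkable_fraction = sum(fractions.get(name, 0.0) for name in WALKABLE)
print(walkable_fraction)  # 0.25
```

A robot would care less about the summary number than about where the walkable pixels are, but the same per-pixel label grid underlies both.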

The Bigger Picture

What Niantic has pulled off is, by any measure, an extraordinary feat of data engineering.

They built a product that people genuinely loved. They made it location-based, so players naturally distributed themselves across cities and towns around the world. They built an in-game incentive for players to scan specific landmarks with precision.

They ran this operation for a decade, long enough to accumulate the kind of temporal diversity – different times, seasons, weather, lighting conditions – that no paid data collection effort could realistically replicate.

And they did it while the world watched and thought they were just catching Pokémon.

The future of robotics, AR glasses, autonomous navigation, and spatial AI wasn’t built in a sterile lab. It was built outside a coffee shop in Mumbai, beside a temple in Tokyo, next to a post office in São Paulo, and on a street corner in Chicago – by people who just wanted to catch a shiny Charizard.

You played a game. You built a world model.

The robots are grateful.